(IN BRIEF) Researchers at the Technical University of Munich have developed a robot capable of locating misplaced objects by combining three-dimensional vision with language models that encode contextual knowledge about everyday environments. The robot constructs an accurate spatial map of its surroundings and uses information from language models to determine where an item is most likely to be found; in tests, this let it locate objects nearly 30 percent more efficiently than a random search. By assigning probability scores to different locations, the robot can prioritize likely search areas, and by comparing past and current images it can detect changes in its environment with around 95 percent accuracy. The technology demonstrates how visual perception and language-based reasoning can be integrated to improve robotic autonomy. Future developments will focus on enabling robots to physically interact with their surroundings so that they can search inside cupboards and drawers. The research contributes to broader efforts at TUM to develop intelligent robots capable of operating in complex real-world environments.

(PRESS RELEASE) MUNICH, 12-Mar-2026 — /EuropaWire/ — Technical University of Munich (TUM) researchers have developed a robot capable of locating misplaced objects by combining three-dimensional vision with knowledge derived from language models. The system integrates information gathered from its surroundings with contextual knowledge to determine where an object is most likely to be found, enabling it to search more efficiently.

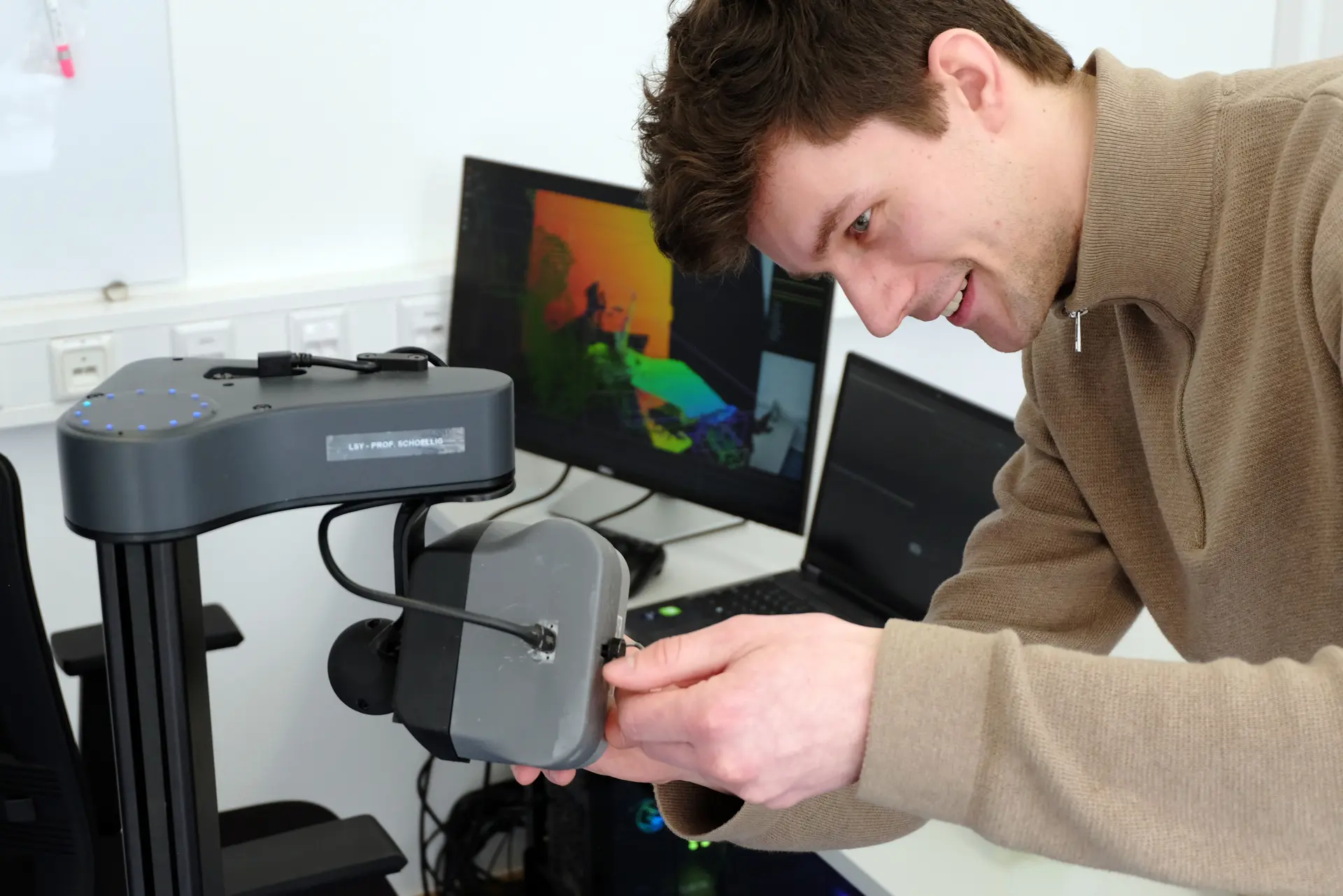

The robot, created at Prof. Angela Schoellig’s Learning Systems and Robotics Lab, is a mobile platform with a camera mounted on top. Although it looks simple, the machine represents a significant step forward in robotics because it combines visual perception with the ability to reason about how objects relate to everyday environments.

To locate an item such as a misplaced pair of glasses, the robot first scans its surroundings and generates a detailed spatial map of the room. Although the camera captures two-dimensional images, each pixel also carries a depth measurement, which allows the system to reconstruct a precise three-dimensional representation of the environment. This spatial model is updated continuously and is accurate to the centimeter. A connected laptop processes the images to determine which objects are visible and how they relate to human activity.
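Turning per-pixel depth into 3D points is commonly done with the pinhole camera model. The sketch below is a minimal illustration of that general idea, not the TUM team's pipeline: it assumes a depth image in metres given as a nested list, hypothetical camera intrinsics `fx`, `fy` (focal lengths in pixels) and `cx`, `cy` (principal point), and it leaves out the step of transforming points into a shared map frame as the robot moves.

```python
from typing import List, Tuple

Point3D = Tuple[float, float, float]


def backproject(depth: List[List[float]], fx: float, fy: float,
                cx: float, cy: float) -> List[Point3D]:
    """Turn a depth image (metres per pixel) into 3D points in the camera frame.

    Pinhole model: a pixel (u, v) with depth z maps to
    x = (u - cx) * z / fx and y = (v - cy) * z / fy.
    fx, fy, cx, cy are hypothetical intrinsics supplied by the caller.
    """
    points: List[Point3D] = []
    for v, row in enumerate(depth):      # v = pixel row index
        for u, z in enumerate(row):      # u = pixel column index
            if z <= 0.0:
                continue                 # skip pixels with no valid depth reading
            points.append(((u - cx) * z / fx, (v - cy) * z / fy, z))
    return points


# Usage: a 2x2 depth image whose bottom-right pixel has no reading.
depth = [[2.0, 2.0],
         [2.0, 0.0]]
print(backproject(depth, fx=1.0, fy=1.0, cx=0.0, cy=0.0))
# [(0.0, 0.0, 2.0), (2.0, 0.0, 2.0), (0.0, 2.0, 2.0)]
```

A real system would additionally fuse many such point sets, taken from different robot poses, into one continuously updated map.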

According to Angela Schoellig, the project aims to equip robots with the ability to interpret their environments in a meaningful way. Developing this kind of contextual awareness is essential for machines that must operate autonomously in real-world settings where layouts and objects frequently change. The approach is particularly relevant for future applications such as humanoid robots working in industrial facilities or robotic assistants operating in domestic care environments.

A key element of the system is its ability to translate general knowledge from language models into instructions the robot can use. For instance, the robot understands that everyday items like glasses are commonly placed on surfaces such as tables or window sills, while locations such as stovetops or sinks are unlikely storage spots. The language model identifies relationships between objects and environments, and this information is converted into a format that the robot can incorporate into its spatial map.
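One simple way to picture how language-model knowledge could become something a spatial map can store: obtain a plausibility score for each (object, place) pair and normalise those scores over the places that actually appear in the map. This is a hypothetical illustration, not the method from the paper. The numbers in `LM_SCORES` are made-up placeholders standing in for a language-model query, and the region ids and the `default` score are assumptions.

```python
from typing import Dict

# Hypothetical plausibility scores (0..1) that a language model might return for
# "how likely is a pair of glasses to be left on/in X?". Placeholder values only.
LM_SCORES: Dict[str, float] = {
    "table": 0.9,
    "window sill": 0.7,
    "sofa": 0.5,
    "stovetop": 0.05,
    "sink": 0.05,
}


def prior_over_regions(region_labels: Dict[str, str],
                       scores: Dict[str, float],
                       default: float = 0.1) -> Dict[str, float]:
    """Map each region id in the spatial map to a normalised prior probability.

    region_labels: region id -> semantic label detected in the map (hypothetical ids).
    scores: label -> language-model plausibility score.
    default: assumed score for labels the language model was not asked about.
    """
    raw = {rid: scores.get(label, default) for rid, label in region_labels.items()}
    total = sum(raw.values())
    if total <= 0:
        # No usable information: fall back to a uniform prior (or nothing at all).
        return {rid: 1.0 / len(raw) for rid in raw} if raw else {}
    return {rid: s / total for rid, s in raw.items()}


regions = {"r1": "table", "r2": "sink", "r3": "window sill", "r4": "shelf"}
prior = prior_over_regions(regions, LM_SCORES)
for rid, p in sorted(prior.items(), key=lambda kv: kv[1], reverse=True):
    print(f"{rid} ({regions[rid]}): {p:.2f}")
```

Normalising over the regions actually detected means the same language-model knowledge yields a different prior in every room, depending on which surfaces the map contains.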

Within the map, the robot assigns numerical probabilities to different locations, continuously recalculating where the searched object is most likely to appear. As a result, the robot prioritizes high-probability areas rather than scanning the room randomly. Tests conducted by the research team show that this approach allows the robot to locate objects nearly 30 percent more efficiently than random search strategies. Artificial intelligence plays a dual role in this system: it powers both the visual recognition process and the contextual reasoning provided by the language model.
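The "look in the most likely place first, then recalculate" loop resembles classic Bayesian search. The sketch below is an illustrative stand-in, not the team's planner, and it does not reproduce their experiments: it assumes a hypothetical detection probability `p_detect` (the chance the robot spots the object if it really is in the inspected region, which must be below 1) and a hypothetical `is_there` callback in place of real perception. After each unsuccessful look, Bayes' rule shifts probability mass toward the regions not yet ruled out.

```python
from typing import Callable, Dict, List, Optional


def update_after_miss(prior: Dict[str, float], searched: str,
                      p_detect: float) -> Dict[str, float]:
    """Bayes update after inspecting `searched` without seeing the object."""
    miss = 1.0 - prior[searched] * p_detect      # P(no detection at `searched`)
    if miss <= 0.0:
        raise ValueError("p_detect must be below 1 when a region has probability 1")
    post = {r: p / miss for r, p in prior.items()}
    post[searched] = prior[searched] * (1.0 - p_detect) / miss
    return post


def greedy_search(prior: Dict[str, float], is_there: Callable[[str], bool],
                  p_detect: float = 0.9, max_looks: int = 20) -> Optional[List[str]]:
    """Always inspect the currently most probable region; return the visit order."""
    belief = dict(prior)
    visited: List[str] = []
    for _ in range(max_looks):
        target = max(belief, key=belief.get)     # highest-probability region first
        visited.append(target)
        if is_there(target):                     # hypothetical perception check
            return visited
        belief = update_after_miss(belief, target, p_detect)
    return None                                  # gave up after max_looks inspections


prior = {"table": 0.5, "window sill": 0.3, "sofa": 0.15, "sink": 0.05}
print(greedy_search(prior, is_there=lambda region: region == "sofa"))
# ['table', 'window sill', 'sofa']
```

Because a miss only lowers a region's probability rather than zeroing it, the searcher can return to a region it has already checked if every alternative is eventually ruled out.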

Another important capability of the robot is its ability to remember previously captured images of its environment. By comparing past and current images, the system can detect changes within the room. If a new object suddenly appears in the scene, the robot identifies the difference with an accuracy of around 95 percent and marks the area as a highly probable location for the item being searched.
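A toy version of the "compare past and current observations" idea: represent each observation as the set of occupied map cells, treat cells that are occupied now but were free before as changes, and raise the search probability of those cells. This is a hypothetical simplification for illustration only; the 2D cell layout, the `boost` factor and the function names are assumptions, and the sketch says nothing about how the researchers reach their reported accuracy of around 95 percent.

```python
from typing import Dict, Set, Tuple

Cell = Tuple[int, int]  # (row, column) index of a 2D map cell; hypothetical layout


def new_cells(before: Set[Cell], now: Set[Cell]) -> Set[Cell]:
    """Cells occupied in the current scan but not in the remembered one."""
    return now - before


def boost_changed(prior: Dict[Cell, float], changed: Set[Cell],
                  boost: float = 10.0) -> Dict[Cell, float]:
    """Multiply the probability of changed cells by `boost`, then renormalise."""
    raw = {c: p * (boost if c in changed else 1.0) for c, p in prior.items()}
    total = sum(raw.values())
    if total <= 0:
        return raw  # nothing to normalise
    return {c: p / total for c, p in raw.items()}


before = {(0, 0), (0, 1), (2, 2)}
now = {(0, 0), (0, 1), (2, 2), (1, 3)}   # something appeared at cell (1, 3)
changed = new_cells(before, now)
prior = {(0, 0): 0.4, (0, 1): 0.3, (2, 2): 0.2, (1, 3): 0.1}
posterior = boost_changed(prior, changed)
print(changed, max(posterior, key=posterior.get))  # {(1, 3)} (1, 3)
```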

The research team is already planning the next stage of development. Future versions of the robot will be designed to search inside cupboards, drawers and other enclosed spaces. Achieving this will require the robot to interact physically with its surroundings rather than simply observing them.

To accomplish this, the robot will need robotic arms and hands capable of opening doors and drawers while understanding how different mechanisms work. It must determine how a cupboard opens, identify handles and apply the correct motion to manipulate them. These capabilities would enable robots to perform more complex search tasks in everyday environments where objects are often hidden from view.

The research behind the system is detailed in the scientific paper “Where did I leave my glasses? Open-Vocabulary Semantic Exploration in Real-World Semi-Static Environments”, authored by Benjamin Bogenberger, Oliver Harrison, Orrin Dahanaggamaarachchi, Lukas Brunke, Jingxing Qian, Siqi Zhou and Angela P. Schoellig. The study was published on March 3, 2026 in IEEE Robotics and Automation Letters.

The work is also connected to the Munich Institute of Robotics and Machine Intelligence (TUM MIRMI), an interdisciplinary research institute at TUM dedicated to advancing robotics and artificial intelligence. The institute brings together expertise from nearly 80 university chairs to develop innovative robotic and AI-driven technologies across areas including healthcare, environmental monitoring, mobility, work, and security.

Publications

Where did I leave my glasses? Open-Vocabulary Semantic Exploration in Real-World Semi-Static Environments; Benjamin Bogenberger, Oliver Harrison, Orrin Dahanaggamaarachchi, Lukas Brunke, Jingxing Qian, Siqi Zhou, Angela P. Schoellig; IEEE Robotics and Automation Letters, 3 March 2026; ieeexplore.ieee.org/document/11359697

Further information and links

- Scientific video: https://utiasdsl.github.io/semi-static-semantic-exploration/

- Prof. Angela Schoellig is a member of the board of the Munich Institute of Robotics and Machine Intelligence (TUM MIRMI). The institute is an integrative research institute at the Technical University of Munich (TUM) that focuses on robotics and AI. The institute brings together expertise in key areas of robotics, including perception and data science. Nearly 80 TUM chairs are networked within MIRMI to develop innovative robotic and AI-supported solutions for the environment, health, mobility, work, as well as security and defense. TUM MIRMI is headed by Prof. Lorenzo Masia. Further information can be found at www.mirmi.tum.de.

Media Contacts:

Corporate Communications Center

Andreas Schmitz

presse@tum.de

Contacts to this article:

Prof. Angela Schoellig

Chair of Safety, Performance and Reliability for Learning Systems

Technical University of Munich (TUM)

angela.schoellig@tum.de

SOURCE: Technical University of Munich
